What just happened? Once Google became heavily involved in generative AI, it was only a matter of time before the company used the technology to answer search engine queries. That day has arrived: Google will now attempt to organize and contextualize search results using its Gemini AI. Unfortunately, the usual problems with hallucinations and other AI-related concerns surfaced on day one.

Google Search results in the US will now include AI-generated summaries, which are meant to help the search engine answer complex questions. The company plans to roll out the feature to other countries in the coming months, with the goal of reaching a billion users by the end of 2024.

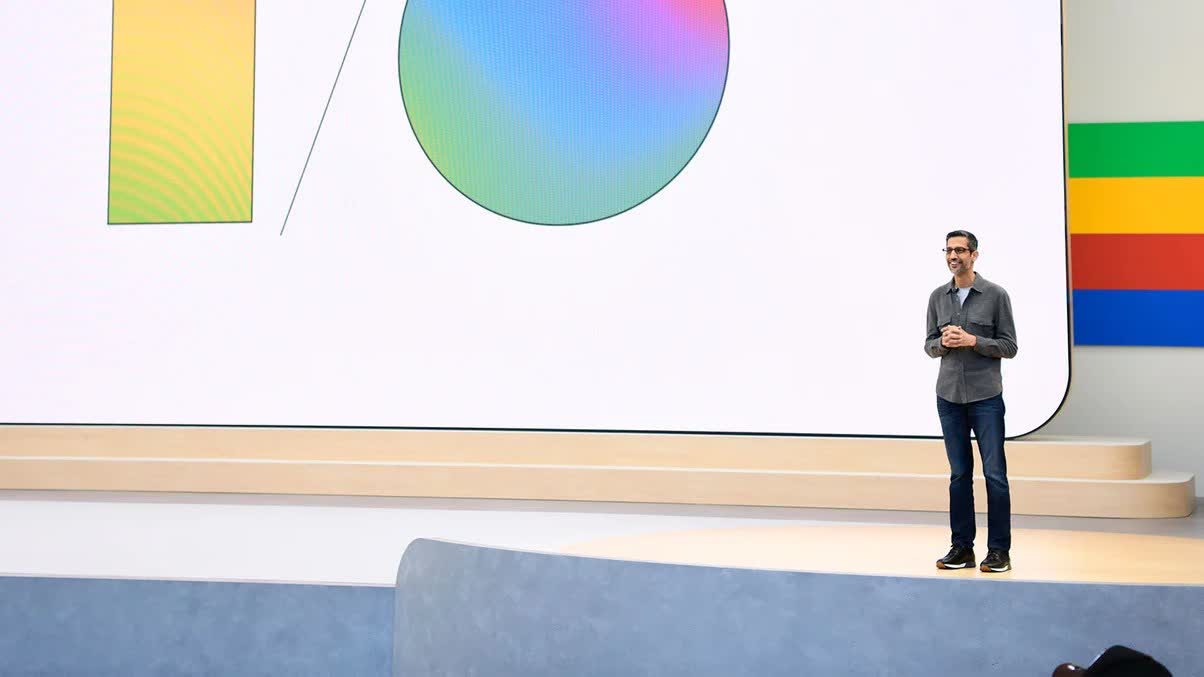

AI Overviews leverage Google’s Gemini AI, formerly called Bard, to create detailed responses to multi-layered questions. The company’s presentation (above) shows users looking for certain kinds of businesses in specific locations or asking for guides to various tasks. The search engine replies with multi-step answers sourced from the top search results, which might reduce the number of individual searches users need to make.

After receiving an initial result, users can make small changes to queries to continue conversing with Gemini and refine their results. A future update will add the ability to ask the search engine for simpler or more detailed answers.

Addressing concerns that AI Overviews will siphon traffic from websites by copying their information onto the results page, much as Google's search excerpts already do, the company claims that the new feature drives users toward a wider variety of sites. Google's testing supposedly indicated that sites referenced in AI Overviews received more clicks, which sounds counterintuitive (and simply not true). The claim also doesn't address Google's use of websites' content without their permission.

Using the example in your blog post – which I presume someone at Google must have looked at before putting in a blog post – we have AI answers leading to AI answers.

What a weird timeline. pic.twitter.com/CKG3kX3PDD

– Glen Allsopp (@ViperChill) May 14, 2024

However, soon after Google unveiled the new search functionality, users pointed out that the AI often pulls information from Quora, which itself displays AI-generated answers. As a result, when a human asks Google a question, there's a good chance the AI is simply relaying another AI's answer, creating a potentially alarming feedback loop that casts further doubt on the integrity of the results.

Furthermore, The Verge spotted a critical error that Gemini made in one of Google's presentation demos. The new Gemini search feature tries to analyze user-uploaded videos as context for search queries, and the presentation used a video of a malfunctioning film camera, along with a question about fixing it, as an example. Unfortunately, one of the AI's suggestions involved opening the camera's back door and removing the film.

What Gemini neglected to mention is that this should only be done in a dark room; otherwise, light exposure would ruin the film. This indicates that Google has yet to solve the hallucination problem that has plagued generative AI since its inception.